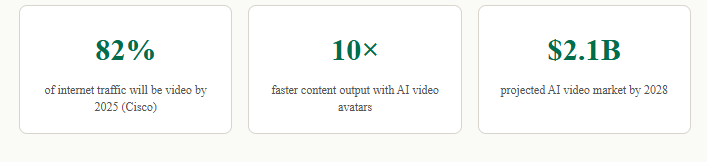

The rise of the AI twin generator is rewriting the rules of content production, enabling creators, brands, and educators to publish at scale without stepping in front of a camera for every video.

Imagine waking up to find your digital self has already published three training videos, two product demos, and a personalized welcome message — all while you slept. This is no longer a futuristic fantasy. Thanks to the modern AI twin generator, round-the-clock video content creation has become a practical reality for businesses and solo creators alike.

What is an AI twin generator?

An AI twin generator is a technology platform that creates a photorealistic, voice-matched digital replica of a real person — capable of speaking, gesturing, and delivering content on video entirely through artificial intelligence. Unlike simple deepfakes or avatar tools, a true AI twin learns your facial micro-expressions, vocal cadence, emotional inflections, and even your signature hand gestures from a short training session.

Once built, this digital version of you can be prompted to deliver any script, in any language, at any time — without you needing to book a studio, apply makeup, or wait for perfect lighting. Platforms like HeyGen, Synthesia, Tavus, and D-ID have pioneered this space, but a new generation of tools is pushing personalization and realism far further than anything seen before.

The term “AI twin” is significant because it implies parity — not just a lookalike, but a digital agent that represents you authentically enough to be trusted by your audience. This distinction matters deeply for brand credibility and viewer engagement.

How AI twin technology works

The pipeline behind an AI twin generator involves several sophisticated layers of machine learning working in concert:

- Neural face modeling: A model trained on hours (or, in newer systems, minutes) of source video builds a 3D representation of your face and animates it with neural rendering techniques such as NeRF (Neural Radiance Fields).

- Voice cloning: Text-to-speech models are fine-tuned on samples of your voice, capturing tone, pacing, and accent with uncanny accuracy.

- Lip-sync synthesis: Audio output is precisely mapped to lip and jaw movements using audio-driven facial animation, ensuring natural speech that matches the generated voice.

- Body and gesture generation: Advanced systems model upper body movement, maintaining natural head nods, blinks, and subtle shifts that prevent the “uncanny valley” flatness of older avatars.

- Background and scene composition: AI-generated environments or green-screened backdrops are composited in real time, letting your twin appear in any setting imaginable.

The result is a video that, to most viewers, is indistinguishable from the real person — right down to the characteristic way they pause before making a key point.
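To make the layering concrete, the stages listed above can be pictured as a chain of steps that each take the output of the previous one. The following is only a minimal sketch under that assumption: the stage names, `RenderState`, and `run_pipeline` are hypothetical placeholders, not the API of any platform named in this article, and each stage is a stub that records its own name instead of running a model.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class RenderState:
    """Carries the script plus the names of the stages applied so far."""
    script: str
    completed: List[str] = field(default_factory=list)


def make_stage(name: str) -> Callable[[RenderState], RenderState]:
    """Return a placeholder stage that only records that it ran."""
    def stage(state: RenderState) -> RenderState:
        # A real stage would call a model here; this stub just logs its name.
        return RenderState(state.script, state.completed + [name])
    return stage


# Stage order mirrors the list above. Names are illustrative assumptions.
PIPELINE = [
    make_stage("face_model"),
    make_stage("voice_clone"),
    make_stage("lip_sync"),
    make_stage("gesture"),
    make_stage("scene_composite"),
]


def run_pipeline(script: str) -> RenderState:
    """Pass the script through every stage in order."""
    state = RenderState(script)
    for stage in PIPELINE:
        state = stage(state)
    return state


result = run_pipeline("Welcome to the course.")
print(result.completed)
```

Keeping each stage behind the same small interface is one plausible design choice: it lets a single stage, such as lip-sync, be swapped out without touching the others.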

Why 24/7 video creation is now a reality

The economics of traditional video production have always imposed hard constraints on scale. A human presenter needs breaks, travel, preparation, and re-shoots. Post-production adds hours of editing per minute of final footage. A single corporate training video can cost thousands of dollars and take weeks from concept to delivery.

An AI twin generator collapses this timeline dramatically. Once the digital twin is created, producing a new video becomes as simple as typing a script and clicking render. Most platforms deliver a finished, polished video in minutes. This means a content team that previously released two videos a week could theoretically release twenty — or two hundred.

For global organizations, the implications are even larger. An AI twin can simultaneously deliver the same message in Spanish, Mandarin, Hindi, and Arabic — localized not just in language but in lip movement — all from a single English script. No international film crews, no localization agencies, no scheduling conflicts across time zones.
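In practice, "one script, many languages" usually amounts to fanning a single source script out into one render job per target language. The sketch below illustrates that idea only. `RenderJob`, `build_jobs`, the twin identifier, and the language codes are assumptions made for this example; a real platform would define its own request format and handle the translation and lip-sync steps itself.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class RenderJob:
    """One video to render: which twin, which script, which language."""
    twin_id: str
    source_script: str
    target_language: str


def build_jobs(twin_id: str, script: str, languages: List[str]) -> List[RenderJob]:
    """Create one job per target language from a single source script."""
    return [RenderJob(twin_id, script, lang) for lang in languages]


# Hypothetical twin ID; codes stand for Spanish, Mandarin, Hindi, and Arabic.
jobs = build_jobs("twin-001", "Welcome to the team.", ["es", "zh", "hi", "ar"])
for job in jobs:
    print(job.target_language, "<-", job.source_script)
```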

This is why “24/7 video creation” is not merely a marketing promise. It is the genuine operational reality enabled by mature AI twin platforms today.

Key benefits for creators and brands

1. Massive reduction in production costs

Studio time, lighting rigs, camera operators, video editors — these expenses evaporate when your AI twin handles on-screen delivery. Brands report cost reductions of 60–80% on explainer and training video production after adopting AI twin generators.
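To see what a reduction in that range could mean for a single video, here is a small worked calculation. The baseline figure is a made-up number chosen purely for illustration and does not come from any brand's reported data; only the 60% and 80% bounds are taken from the paragraph above.

```python
def cost_after_reduction(baseline: int, reduction_pct: int) -> float:
    """Return the remaining cost after a percentage reduction."""
    if not 0 <= reduction_pct <= 100:
        raise ValueError("reduction_pct must be between 0 and 100")
    return baseline * (100 - reduction_pct) / 100


# Hypothetical baseline cost per video in dollars (an assumption).
BASELINE_COST = 5000

cost_at_60 = cost_after_reduction(BASELINE_COST, 60)
cost_at_80 = cost_after_reduction(BASELINE_COST, 80)
print(f"Remaining cost per video: {cost_at_80:.0f} to {cost_at_60:.0f} dollars")
```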

2. Consistent brand voice and presentation

Human presenters have bad days. They get sick, mispronounce words, or subtly shift their communication style over time. An AI twin delivers your brand voice with perfect consistency across every single video — same energy, same cadence, same professional presentation at 3 AM on a Tuesday as at 10 AM on a Monday.

3. Rapid personalization at scale

One of the most powerful emerging use cases is hyper-personalized video. Sales teams are using AI twin generators to send individual prospects a video that includes their name, their company, and specific pain points, all generated automatically from CRM data. What previously took a sales rep an hour per video can now be generated automatically for thousands of contacts.
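The CRM-driven personalization described above typically starts with filling a script template from each contact record before any video is rendered. The following is a minimal sketch of that first step using Python's standard `string.Template`. The field names (`first_name`, `company`, `pain_point`) and the sample rows are invented for this example, and the rendering step itself is left out.

```python
from string import Template
from typing import Dict, List

# Script wording and field names are illustrative assumptions.
SCRIPT_TEMPLATE = Template(
    "Hi $first_name, I noticed $company is working on $pain_point. "
    "Here is a short walkthrough that might help."
)


def personalize(records: List[Dict[str, str]]) -> List[str]:
    """Fill the template once per CRM record."""
    scripts = []
    for record in records:
        # substitute() raises KeyError when a field is missing,
        # so incomplete CRM rows fail loudly instead of silently.
        scripts.append(SCRIPT_TEMPLATE.substitute(record))
    return scripts


# Made-up sample rows standing in for a CRM export.
crm_rows = [
    {"first_name": "Ana", "company": "Acme", "pain_point": "onboarding churn"},
    {"first_name": "Ravi", "company": "Globex", "pain_point": "slow support replies"},
]

scripts = personalize(crm_rows)
for script in scripts:
    print(script)
```

Failing on a missing field is a deliberate choice here: a video that greets a prospect with a blank name is likely worse than no video at all.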

4. Multilingual content without re-filming

As noted earlier, language localization has historically required re-filming or expensive dubbing. AI twin systems render native-tongue lip movements for any target language, enabling genuine multilingual video content at near-zero marginal cost.

5. Creative freedom and experimentation

When video production no longer requires scheduling a shoot, creators experiment more freely. New formats, rapid A/B testing of messaging, seasonal variations, and topical updates can all be published without the inertia of traditional production cycles.


Expert Opinion

AI twin generators are fundamentally shifting the relationship between content volume and human bandwidth. At Tagshop AI, we’re seeing firsthand how brands that adopt AI twin technology are not just producing more video — they’re producing smarter video. The ability to generate personalized, shoppable video content at scale, tied directly to product feeds and real-time inventory, is something that was simply impossible two years ago. What excites us most is not the automation itself, but what automation unlocks: creative teams redirecting their energy from logistics toward strategy, storytelling, and genuine audience connection. The AI twin generator doesn’t replace the human creator — it liberates them.

— Tagshop AI Product Team, 2025. Tagshop AI is a leading platform combining user-generated content, AI video tools, and social commerce to help brands grow through authentic, scalable video experiences.

Use cases across industries

E-learning and corporate training

Training departments are among the fastest adopters of AI twin generators. A compliance training module that once required a full production day can now be updated in an afternoon, which is critical when regulations change and every employee needs to be re-certified quickly. L&D teams create instructor-led courses featuring a realistic AI twin of a subject matter expert, scaled across dozens of modules without ever pulling that expert away from their actual work.

Marketing and social media

Content marketers using AI twin generators report the ability to maintain daily video publishing schedules on YouTube, LinkedIn, TikTok, and Instagram simultaneously — something that was previously sustainable only for large media companies with dedicated teams. Brands deploy their AI spokesperson twin to cover product launches, seasonal promotions, trend commentary, and FAQ content in real time.

Healthcare and patient education

Medical organizations use AI twin generators to produce patient education videos in multiple languages, featuring a trusted physician’s digital twin. This extends the reach of expert medical communicators without consuming their clinical time, delivering high-quality health literacy content to underserved populations.

Customer support and onboarding

SaaS companies embed AI twin videos inside product onboarding flows, for example a hyper-personalized welcome from a realistic digital version of the CEO or Customer Success lead, generated dynamically for each new user. This warm human touch, delivered at machine scale, is intended to improve activation rates.

Retail and e-commerce

Shoppable video is one of the hottest growth areas in e-commerce, and AI twin generators are accelerating it. Retailers generate product demonstration videos featuring a brand ambassador’s AI twin for every SKU in their catalog — something that would require years of filming at traditional production speeds.

Limitations and ethical considerations

No technology this powerful arrives without serious responsibilities attached. Responsible use of an AI twin generator demands transparency: viewers should ideally know when they are watching a digital twin rather than a live recording. Most reputable platforms require explicit consent from the person being twinned, and watermarking or disclosure labels are increasingly standard practice.

There are also current technical limitations worth acknowledging. Emotional nuance in highly complex conversations — genuine empathy, spontaneous humor, improvised responses to unexpected questions — remains difficult to replicate convincingly. AI twins still struggle with extreme lighting conditions, unusual angles, and highly expressive performances. These gaps are narrowing rapidly, but they are real.

Deepfake misuse is the shadow side of this technology. Without strong platform governance and societal awareness, AI twin generators could be weaponized for fraud, disinformation, or non-consensual impersonation. The most responsible platforms invest heavily in consent verification, watermarking standards, and detection tooling as core product features rather than afterthoughts.
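One way to treat consent and disclosure as core features rather than afterthoughts is to check them before any render job is accepted. The sketch below shows that idea in its simplest form. Everything in it is hypothetical: `RenderRequest`, `approve`, the consent registry, and the label text are assumptions for illustration, and a real platform would need identity verification and tamper-resistant watermarking on top of a check like this.

```python
from dataclasses import dataclass
from typing import Set


class ConsentError(Exception):
    """Raised when a twin's owner has no recorded consent."""


@dataclass(frozen=True)
class RenderRequest:
    twin_owner: str
    script: str


@dataclass(frozen=True)
class ApprovedRender:
    request: RenderRequest
    disclosure_label: str


def approve(request: RenderRequest, consented_owners: Set[str]) -> ApprovedRender:
    """Reject requests without recorded consent; label the rest."""
    if request.twin_owner not in consented_owners:
        raise ConsentError(f"No consent on record for {request.twin_owner}")
    # Every approved render carries a viewer-facing disclosure label.
    return ApprovedRender(request, "AI-generated video")


# Hypothetical consent registry and request.
consented = {"dr.rivera"}
approved = approve(RenderRequest("dr.rivera", "Take one tablet daily."), consented)
print(approved.disclosure_label)
```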

Frequently asked questions

What is an AI twin generator used for?

An AI twin generator is used to create a realistic digital video replica of a person that can deliver scripted content on-camera without any filming. Common uses include corporate training, marketing videos, multilingual content, personalized sales outreach, and customer onboarding.

How long does it take to create an AI twin?

Most modern platforms require between 2 and 10 minutes of source video footage to generate an AI twin. The initial processing takes anywhere from a few hours to 24 hours depending on the platform, after which video generation from scripts takes only minutes.

Is it legal to use an AI twin generator?

Legality depends on consent and on where you operate. Reputable AI twin generator platforms require explicit consent from the person being digitized and prohibit the creation of twins of third parties without their permission, and many jurisdictions are developing specific legislation around synthetic media.

Can an AI twin speak multiple languages?

Yes. Leading AI twin generators support dozens of languages and can render realistic lip-sync in each language from a single source model, making multilingual video content production highly cost-effective.

What is the difference between an AI avatar and an AI twin?

An AI avatar is typically a generic or stylized digital character. An AI twin is a photorealistic, voice-matched digital replica of a specific real person, trained on their actual appearance and voice to produce highly authentic results.
